For agencies managing creative production, the primary friction point isn’t just generating a single high-quality image; it is maintaining visual coherence across a fragmented media mix. A campaign that looks cohesive on a landing page can quickly lose its identity when translated into fifteen different aspect ratios for social ads, display banners, and video interstitials. Generative AI has promised to solve this volume problem, but without a disciplined operational framework, it often introduces «style drift»—a phenomenon where the aesthetic delta between assets becomes wide enough to dilute brand recognition.

Scaling production requires moving beyond one-off prompting and toward a repeatable pipeline. This guide examines how teams are using Banana AI to bridge the gap between high-volume output and strict brand consistency, focusing on tactical workflows rather than theoretical capabilities.

The Architecture of Visual Coherence

The first mistake most teams make in generative production is treating every asset as a fresh start. In a traditional agency workflow, you would have a «master key» or a mood board that dictates lighting, texture, and color palettes. In an AI-driven environment, this «key» needs to be codified through specific models and seeds.

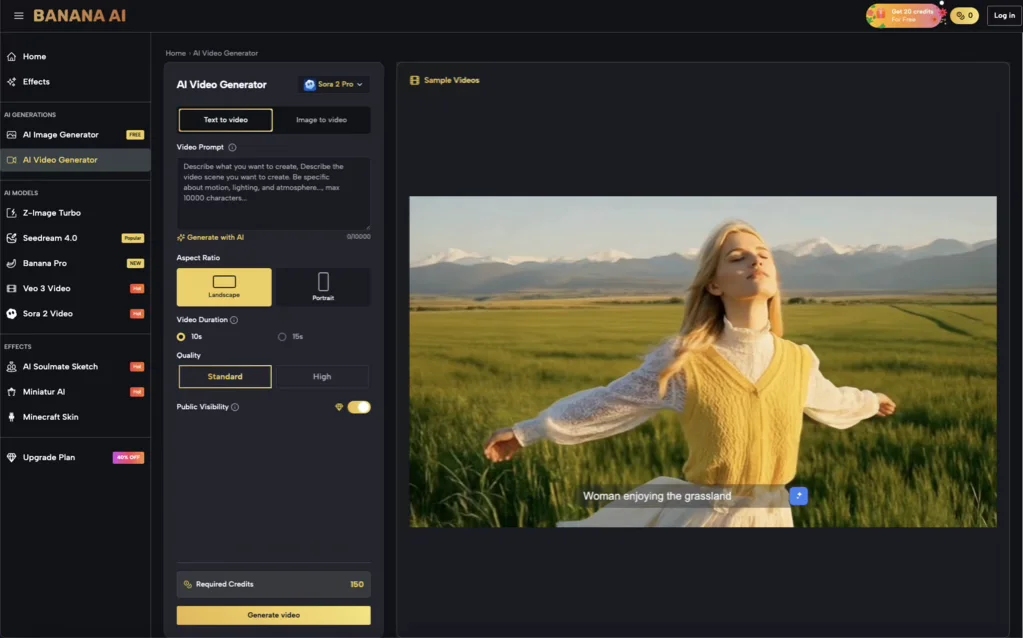

Within the Banana AI ecosystem, consistency is achieved by selecting a base model that aligns with the desired output—whether that is the hyper-realism of Z-Image Turbo or the more artistic leaning of Seedream 4.0. Operationalizing this means documenting which model is the «source of truth» for a specific client. If a brand requires a high-contrast, cinematic look, the production lead must mandate that all sub-assets—regardless of the designer creating them—derive from that specific model’s architecture.

It is important to acknowledge a current limitation in generative workflows: cross-model consistency. If you generate a hero image using one model and attempt to create supporting assets using a different, «faster» model, the lighting and texture rendering will almost certainly clash. High-volume teams must prioritize model lock-in over speed so that a set of 50 assets actually feels like a single campaign.
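One way to make the «source of truth» rule enforceable rather than tribal knowledge is to keep it in a small registry that every generation script consults. The sketch below is illustrative only: `BrandProfile`, `BRAND_PROFILES`, `check_model_lock`, the client name, and the seed value are all hypothetical, and nothing here calls a Banana AI API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrandProfile:
    """Hypothetical per-client record; field names are illustrative."""
    client: str
    locked_model: str  # the client's "source of truth" model
    base_seed: int     # seed recorded alongside the approved hero image


# Hypothetical registry; in practice this might live in a shared config file.
BRAND_PROFILES = {
    "acme-travel": BrandProfile("acme-travel", "Seedream 4.0", 424242),
}


def check_model_lock(client: str, requested_model: str) -> BrandProfile:
    """Return the client's profile, or raise if a different model is requested."""
    profile = BRAND_PROFILES[client]
    if requested_model != profile.locked_model:
        raise ValueError(
            f"{client} is locked to {profile.locked_model!r}, "
            f"got {requested_model!r}"
        )
    return profile
```

Calling `check_model_lock("acme-travel", "Z-Image Turbo")` raises a `ValueError`, which is the point: a designer reaching for a different model gets stopped before any credits are spent.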

Standardizing the Input Layer with Banana AI Image

Consistency begins at the prompt level, but it is solidified through technical parameters. When using Banana AI Image, teams should move away from descriptive prose and toward modular prompt structures. A modular prompt separates the «subject» from the «style,» «lighting,» and «camera specs.»

For example, an agency working on a luxury travel account might create a «Style String» that is appended to every prompt: “shot on 35mm, golden hour lighting, muted earth tones, minimalist composition, f/2.8.” By standardizing this string, any team member can generate a new asset—be it a luggage set for a Facebook ad or a landscape for a blog header—that retains the same photographic DNA.
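As a minimal sketch of that idea, the style string can live in one constant and be appended by a helper, so nobody retypes it by hand. The function name `build_prompt` and the two example subjects are assumptions made for illustration; only the style string itself comes from the example above.

```python
# Shared style string from the luxury travel example.
STYLE_STRING = (
    "shot on 35mm, golden hour lighting, muted earth tones, "
    "minimalist composition, f/2.8"
)


def build_prompt(subject: str, style: str = STYLE_STRING) -> str:
    """Join a per-asset subject with the shared style string."""
    # Trim whitespace and trailing punctuation so the join stays clean.
    subject = subject.strip().rstrip(",.")
    if not subject:
        raise ValueError("subject must not be empty")
    return f"{subject}, {style}"


# Hypothetical subjects for two different placements.
facebook_ad = build_prompt("a leather luggage set on a hotel terrace")
blog_header = build_prompt("a coastal landscape at low tide")
```

Both prompts end with the identical style suffix, so the only variable a team member controls is the subject.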

Leveraging Image-to-Image for Iterative Scaling

The Image-to-Image (Img2Img) function is often underutilized in professional settings, yet it is the most effective tool for maintaining spatial consistency. When a team has a winning layout for a landing page hero, they can use that image as a structural reference for secondary assets.

By adjusting the «strength» or «denoising» levels, creators can keep the composition and color weights of the original while changing specific elements. This is particularly useful for global campaigns where the subject matter might need to change (e.g., localizing the background of an ad for different regions) while keeping the visual «vibe» and brand placement identical.
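The localization case can be sketched as a batch of job descriptions that share the reference image, strength, and seed, and differ only in the background wording. Everything here is hypothetical: `Img2ImgJob` is not a Banana AI request schema, and the 0.0 to 1.0 strength range (lower values stay closer to the reference) is an assumption borrowed from common diffusion tooling, so check the actual control in the product before relying on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Img2ImgJob:
    """Hypothetical job description; not an actual Banana AI request schema."""
    reference_image: str  # path to the approved hero layout
    prompt: str
    strength: float       # assumed scale: lower stays closer to the reference
    seed: int


def localized_jobs(reference_image, base_prompt, backgrounds,
                   strength=0.35, seed=1234):
    """Build one job per region, varying only the background wording."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0.0 and 1.0")
    jobs = {}
    for region, background in backgrounds.items():
        prompt = f"{base_prompt}, {background} in the background"
        jobs[region] = Img2ImgJob(reference_image, prompt, strength, seed)
    return jobs
```

For instance, `localized_jobs("hero.png", "a traveler with a suitcase", {"jp": "Tokyo skyline", "fr": "Paris rooftops"})` yields two jobs that point at the same reference image with the same strength and seed, which is what keeps composition and brand placement aligned across regions.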

Managing Multi-Format Output: From Static to Motion

Modern campaigns are rarely static. The transition from a still image to a 6-second social bumper is usually where consistency breaks down. Using the video generation capabilities within Banana AI, teams can maintain the aesthetic continuity established in the static phase.

The «Image-to-Video» workflow is the bridge here. Rather than prompting a video from scratch—which introduces a high degree of randomness—operators should use the finalized, brand-approved static asset as the first frame. This ensures that the textures, characters, and lighting environments are carried over directly into the motion asset.

However, there is a reality check needed for video production: temporal consistency. While current models like Veo 3 have made significant strides, generating long-form coherent motion remains a challenge. For agency teams, the most effective use of AI video is currently in short-form, high-impact visuals—cinemagraphs, atmospheric backgrounds, or product reveals—rather than narrative storytelling with complex character movements.

The Efficiency of Model Selection: Nano vs. Pro

In a production environment, time is a direct cost. Banana AI provides a variety of models, such as Nano Banana for rapid iteration and Banana Pro for high-fidelity final renders. A common tactical error is using high-resource models for the brainstorming or «thumbnailing» phase.

An efficient agency workflow typically follows a tiered approach:

- Exploration: Use Nano Banana for rapid-fire visual testing. This allows the creative team to burn through 100 variations of a concept without exhausting credits or waiting on long render queues.

- Selection: Identify the core «hero» direction.

- Production: Move to Banana Pro or specialized models like Z-Image Turbo for the final high-resolution assets.
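The three tiers above can be written down as a lookup so that scripts and team members pick the model by stage rather than by habit. The model names come from this guide; the `Stage` enum, the mapping, and the `model_for` helper are illustrative assumptions, and selection deliberately maps to no model because it is a human decision.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    EXPLORATION = "exploration"
    SELECTION = "selection"
    PRODUCTION = "production"


# Model names are from the guide; the mapping itself is an assumption.
MODEL_FOR_STAGE = {
    Stage.EXPLORATION: "Nano Banana",
    Stage.PRODUCTION: "Banana Pro",
}


def model_for(stage: Stage) -> Optional[str]:
    """Return the model for a stage, or None for the human-only selection step."""
    return MODEL_FOR_STAGE.get(stage)
```

A client whose locked model is a specialized one such as Z-Image Turbo would override the production entry rather than change the exploration tier.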

This tiered approach also helps in managing the «uncanny valley» and quality control. Higher-tier models are generally better at handling complex prompts, but they still require a human eye to check for anatomical errors or nonsensical textures that can occur in the background of dense images.

Navigating the Limits of AI-Driven Brand Consistency

Despite the advancements in Banana AI Image and its associated models, there are moments of uncertainty that every production lead must account for. One significant hurdle is typography. While models are improving at rendering text, relying on an AI to generate a specific font or a perfectly spelled headline is still a high-risk strategy.

The practical judgment here is to generate the visual background and «vibes» using Banana AI, but to leave the typography and logo placement for traditional post-production tools like Photoshop or Illustrator. Trying to force an AI to handle 100% of the asset—including brand-compliant typography—often results in more «fix-it» time than simply compositing the elements manually.

Another expectation to reset involves character consistency. If a campaign features a recurring person or mascot, achieving a 100% likeness across 20 different poses and environments purely through prompting is difficult. Teams should expect to use techniques like inpainting or manual retouching to bridge the gap between a 90% likeness and a 100% brand-standard match.

Resource Management: Credits and Throughput

For a solo creator, a few failed prompts are a minor annoyance. For an agency, they are a margin killer. Operationalizing Banana AI requires a «Check Twice, Generate Once» mentality. This involves:

- Prompt Validation: Testing style strings on a small scale before launching a batch of 50.

- Credit Budgeting: Assigning credit limits to specific projects or team members to prevent «infinite scroll» syndrome, where a designer spends hours chasing a perfect image that isn’t required for the brief.

- Asset Tagging: Maintaining a shared internal library of successful prompts and their corresponding seeds so that the team isn’t constantly reinventing the wheel.
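The budgeting and tagging practices above can be sketched with two small records: a per-project credit cap that refuses to go over its limit, and a shared library of prompts that worked. All names and numbers are hypothetical; real credit costs depend on the model and plan, and this sketch does not read balances from Banana AI.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectBudget:
    """Hypothetical per-project credit cap; the numbers are made up."""
    limit: int
    spent: int = 0

    def charge(self, credits: int) -> None:
        """Record a spend, refusing any charge that would exceed the limit."""
        if self.spent + credits > self.limit:
            raise RuntimeError(
                f"budget exceeded: {self.spent + credits} > {self.limit}"
            )
        self.spent += credits


@dataclass
class PromptLibrary:
    """Shared record of successful prompts and their seeds, keyed by tag."""
    entries: dict = field(default_factory=dict)

    def save(self, tag: str, prompt: str, seed: int, model: str) -> None:
        self.entries[tag] = {"prompt": prompt, "seed": seed, "model": model}

    def lookup(self, tag: str) -> dict:
        return self.entries[tag]
```

A rejected charge leaves `spent` unchanged, so the check can run before a batch is queued rather than after the credits are gone.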

The Human-in-the-Loop Requirement

The goal of using Banana AI in an agency setting isn’t to replace the designer, but to remove the «grunt work» of resizing, minor variations, and basic asset generation. The final 10% of any asset—the color grading, the final crop, and the quality assurance—must remain a human-led process.

The most successful teams are those that view these tools as a «production assistant» that can handle 80% of the heavy lifting. By acknowledging the limitations—such as occasional artifacts in high-detail areas or the need for manual text overlays—agencies can build a pipeline that is both incredibly fast and consistently high-quality.

Ultimately, scaling visual assets is an exercise in constraint. By locking in models within the Banana AI platform, standardizing prompt structures, and utilizing Image-to-Image workflows, agencies can deliver multi-channel campaigns that feel like a singular, coherent brand story rather than a collection of disconnected AI experiments. Success in this space is less about the «magic» of the prompt and more about the discipline of the process.

Daniel Geyne

Photographer, journalist, and columnist at Yaconic. Expert in the alternative music scene and subgenres such as Trip Hop, Metal, and Punk Rock. Grounded in visual and film theory (Visual Arts and Film), my analysis specializes in breaking down the underground aesthetic. With the insider perspective of my work in media (Sabotage) and advertising campaigns (Audi, Liverpool), I offer a unique critique of how art, counterculture, and brand image interact.