The promise of generative AI for performance marketers has always been centered on the removal of friction. In theory, the ability to churn out dozens of high-quality ad creatives in an afternoon should be the ultimate competitive advantage. If you can test ten times as many hooks as your competitor, you should, mathematically, find a winner ten times faster.

However, the industry is currently hitting a wall. Performance marketing teams are discovering that speed without granular control creates a new kind of technical debt. When a workflow is optimized solely for the “generate” button, the result is often a flood of assets that are 80% correct but 100% unusable for a brand-sensitive campaign. This is the control crisis: the gap between what an AI Video Generator can produce in seconds and what a professional team actually needs to go to market.

The False Efficiency of the One-Click Solution

Most teams start their AI journey by looking for the fastest route from a text prompt to a finished file. On the surface, this makes sense. If a human editor takes four hours to cut a 15-second spot and a machine takes 60 seconds, the efficiency gain looks like a 240x improvement.

The reality is more complicated. In a speed-first workflow, the “creative” work is often just a lottery. You input a prompt, get an output, and if it isn’t right, you change a few words and try again. This cycle of “prompt-and-pray” is not a professional workflow; it is an endurance test. The time saved in production is frequently lost in the sheer volume of “near-miss” assets that require manual QA or extensive post-production fixes.

For a performance marketer, a video that is almost perfect but includes a warped logo or a strange anatomical hallucination is a liability. If your workflow doesn’t allow you to fix specific frames or maintain temporal consistency, you aren’t actually saving time—you are just moving the bottleneck from the editor’s desk to the creative director’s review queue.

The Hallucination Tax on High-Volume Campaigns

When teams prioritize speed, they often ignore the “hallucination tax.” This is the cost of checking every frame of a generated video for errors that could alienate a customer or violate a platform’s ad policy. At a small scale (one or two videos), this is manageable. At a scale of 500 variations for a global launch, it quickly becomes unmanageable.

It is important to acknowledge that no AI Video Generator is currently a “set it and forget it” solution for high-stakes brand work. Generative models remain unpredictable in how they handle physics, lighting, and brand assets over a sustained sequence. If a team ignores these limitations in favor of sheer output volume, it risks shipping content that feels “uncanny” or unprofessional, which can drag down click-through rates and brand sentiment.

The Problem of Asset Drift

Speed-first workflows usually rely on a single, isolated tool for a single task. This leads to what we call “asset drift.” A team might use one tool for static images, another for voiceovers, and a third as their primary AI Video Generator. Because these tools don’t share a common “logic” or stylistic DNA, the final assets often feel disjointed.

In a performance marketing context, consistency is the foundation of trust. If your video hook uses a specific lighting style and color palette, but the mid-roll product shot looks entirely different because it was generated through a different pipeline or a less controllable model, the consumer’s subconscious “scam-dar” goes off. Control isn’t just about making the video look “cool”; it’s about ensuring the visual narrative remains cohesive across every touchpoint of the funnel.

Building a Workflow for Creative Direction, Not Just Production

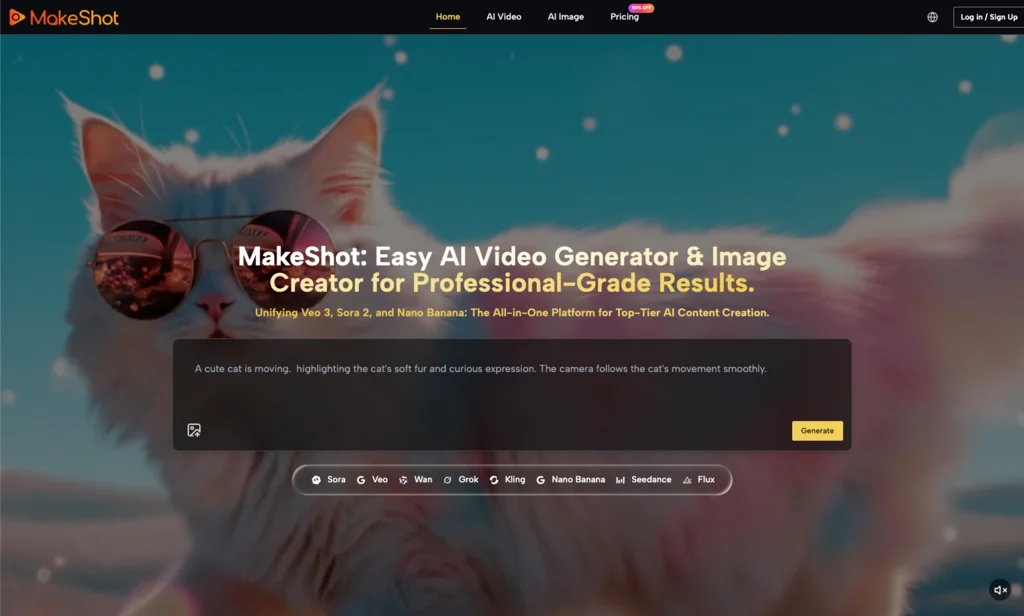

To solve the control crisis, teams need to stop treating AI as a replacement for the editor and start treating it as a new kind of camera and lens system. This requires moving toward platforms that unify disparate models into a single, controllable interface.

This is where a tool like AI Video Generator from MakeShot changes the math. Instead of jumping between disconnected labs, an operator-led workflow uses a unified platform to access top-tier models such as Google Veo, Sora, or Kling within a consistent environment. The goal isn’t just to generate “a video” but to give the operator the specific model that fits the specific need, whether that is the hyper-realism required for a lifestyle shot or the stylized physics needed for a motion graphic.

By centralizing these capabilities, a performance marketer can maintain a higher degree of oversight. You aren’t just hitting “generate” and hoping for the best; you are selecting the engine that best understands the constraints of your brief. In practice, this can shrink the iteration cycle from twenty random attempts to three or four targeted versions.

The Need for Human-in-the-Loop Realism

We must be honest about the current ceiling of the technology. Even with the best AI Video Generator, complex narrative storytelling with specific, recurring human characters remains a significant hurdle. Teams that promise “full automation” of their creative pipeline usually end up with generic, soulless content that fails to convert because it lacks the nuance of human timing and emotional resonance.

The mistake many teams make is trying to automate the *thinking* alongside the *making*. Successful creative operations leads understand that AI is a tool for high-velocity execution of human-led strategy. If you try to remove the human element to save a few more minutes of production time, you lose the “why” behind the creative, and the data will eventually reflect that loss in performance.

Evaluating Tools for Commercial Viability

When auditing a workflow for scale, look past the “wow” factor of a single cherry-picked demo. A commercially viable AI Video Generator should be evaluated on three pillars of control:

- Temporal Consistency: Does the subject remain the same from frame one to frame sixty? If the lighting shifts or the background warps uncontrollably, the speed of generation is irrelevant because the output is unusable.

- Model Diversity: Can the tool switch between different “engines”? Not every ad needs the same aesthetic. A workflow that locks you into one specific model’s “look” will eventually lead to creative stagnation.

- UI for Operators: Is the platform built for an editor who needs to tweak parameters, or is it a “black box” that offers no levers for adjustment?

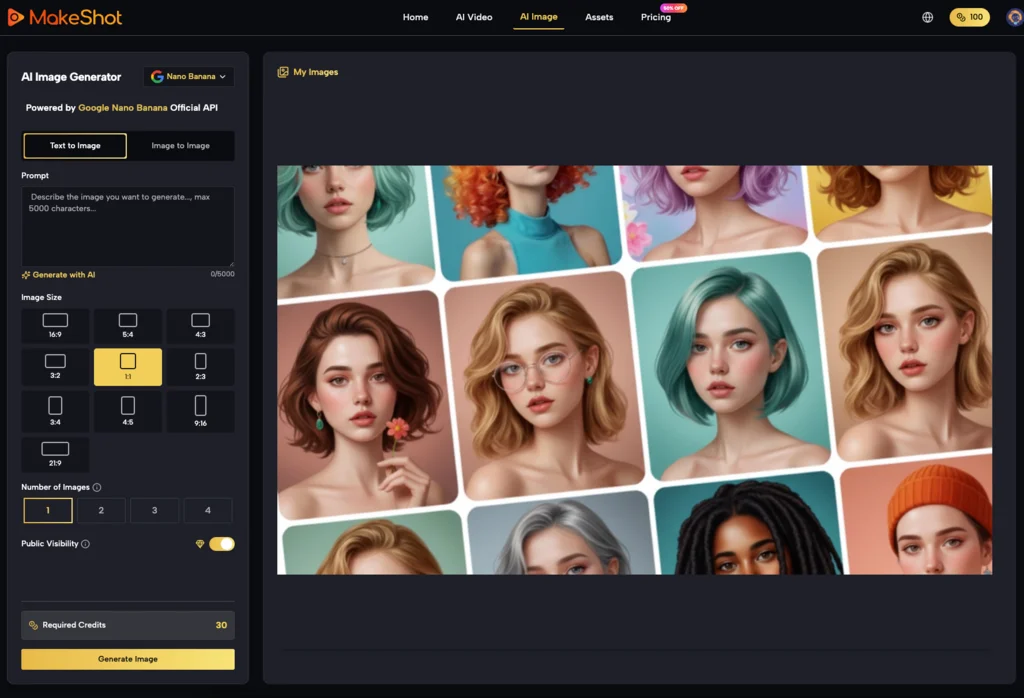

MakeShot focuses on this operator-centric approach. By integrating models like Nano Banana and Seedance alongside the heavy hitters, it allows creators to choose the right tool for the specific fidelity required. This is a systems-minded approach to creative production—one that understands that in a commercial environment, being 5% better and 100% consistent is worth more than being 50% faster and completely unpredictable.

The Transition from Prompting to Production

The “prompt engineer” era is already fading. The next stage of AI-driven performance marketing belongs to the “creative technical director.” These are people who understand the limitations of generative media and build workflows that mitigate those risks through careful selection of tools and rigorous QA standards.

The transition from a speed-first to a control-first mindset is painful because it requires more upfront work. It requires setting up brand guidelines for AI, defining specific visual styles for different models, and accepting that some shots still need to be handled through traditional means. However, this is the only way to build a repeatable asset pipeline that doesn’t collapse under the weight of its own errors.

Final Thoughts on Scalable Control

Speed is a commodity; everyone has access to fast generation now. The competitive moat in the next eighteen months will be the ability to produce AI-generated video that is indistinguishable from high-end traditional production in terms of brand alignment and technical quality.

If your current workflow is built on a “one-click” philosophy, you are likely generating more waste than value. By shifting the focus to tools that offer a unified, controllable environment, such as AI Video Generator platforms that aggregate best-in-class models, teams can finally bridge the gap between “experimental AI” and “commercial-grade video.” The goal isn’t just to be the fastest; it’s to be the most precise at the highest speed possible.

Daniel Geyne

Photographer, journalist, and columnist at Yaconic. Expert in the alternative music scene and subgenres such as Trip Hop, Metal, and Punk Rock. My analysis, grounded in Visual and Film Theory (Visual Arts and Film), led me to specialize in breaking down underground aesthetics. With the insider perspective from my work in media (Sabotage) and advertising campaigns (Audi, Liverpool), I offer a unique critique of how art, counterculture, and brand image interact.